This is a very beautiful sample problem from ISI MStat PSB 2009 Problem 6. It is based on the idea of Restricted Maximum Likelihood Estimators and Mean Squared Errors. Give it a try!

Suppose \(X_1,\ldots,X_n\) are i.i.d. \(N(\theta,1)\), \(\theta_o \le \theta \le \theta_1\), where \(\theta_o < \theta_1\) are two specified numbers. Find the MLE of \(\theta\) and show that it is better than the sample mean \(\bar{X}\) in the sense of having smaller mean squared error.

Maximum Likelihood Estimators

Normal Distribution

Mean Squared Error

This is a very interesting problem! We all know that if the condition "\(\theta_o \le \theta \le \theta_1\), for some specified numbers \(\theta_o < \theta_1\)" had not been given, then the MLE would simply have been \(\bar{X}=\frac{1}{n}\sum_{k=1}^n X_k\), the sample mean of the given sample. But due to the restriction on \(\theta\), things get interestingly complicated.

So, to simplify a bit, let's write the likelihood function of \(\theta\) given this sample, \(\vec{X}=(X_1,\ldots,X_n)'\),

\(L(\theta |\vec{X})=\left(\frac{1}{\sqrt{2\pi}}\right)^n \exp\left(-\frac{1}{2}\sum_{k=1}^n(X_k-\theta)^2\right)\), when \(\theta_o \le \theta \le \theta_1\). Now, taking the natural log of both sides and differentiating, we find that

\(\frac{d\ln L(\theta|\vec{X})}{d\theta}= \sum_{k=1}^n (X_k-\theta) = n(\bar{X}-\theta)\).

Now, verify that if \(\bar{X} < \theta_o\), then the derivative above is negative for every \(\theta\) with \(\theta_o \le \theta \le \theta_1\), so \(L(\theta |\vec{X})\) is a decreasing function of \(\theta\) on that interval. Hence the likelihood attains its maximum at \(\theta_o\) itself. Similarly, when \(\theta_o \le \bar{X} \le \theta_1\), the maximum is attained at \(\bar{X}\). Lastly, when \(\bar{X} > \theta_1\), the likelihood function is increasing on the interval, hence the maximum is found at \(\theta_1\).
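The three cases can also be checked numerically. The sketch below is only an illustration, not part of the original solution: it assumes the bounds \(\theta_o = 0\), \(\theta_1 = 1\), a sample of size 50, and a hypothetical helper `grid_argmax` that maximises the log-likelihood over a fine grid on the interval.

```python
import numpy as np

def log_likelihood(theta, x):
    """Log-likelihood of N(theta, 1) for the sample x, for each candidate theta."""
    theta = np.atleast_1d(theta)
    n = x.size
    # Sum of squared deviations for every candidate theta at once.
    sq = ((x[None, :] - theta[:, None]) ** 2).sum(axis=1)
    return -0.5 * n * np.log(2 * np.pi) - 0.5 * sq

def grid_argmax(x, theta_0, theta_1, num=2001):
    """Hypothetical helper: maximise the log-likelihood over a grid on [theta_0, theta_1]."""
    grid = np.linspace(theta_0, theta_1, num)
    return grid[np.argmax(log_likelihood(grid, x))]

rng = np.random.default_rng(0)
theta_0, theta_1 = 0.0, 1.0   # illustrative bounds (assumption)
noise = rng.normal(size=50)
noise -= noise.mean()         # centre, so the sample mean equals the chosen shift

# Sample mean below, inside, and above the interval.
for shift in (-0.7, 0.4, 1.6):
    x = noise + shift
    best = grid_argmax(x, theta_0, theta_1)
    print(f"sample mean {x.mean():+.2f} -> grid maximiser {best:.3f}")
```

In each case the grid maximiser should land on \(\theta_o\), on (approximately) \(\bar{X}\), or on \(\theta_1\), matching the argument above.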

Hence, the Restricted Maximum Likelihood Estimator of \(\theta\), say

\(\hat{\theta_{RML}} = \begin{cases} \theta_o & \bar{X} < \theta_o \\ \bar{X} & \theta_o\le \bar{X} \le \theta_1 \\ \theta_1 & \bar{X} > \theta_1 \end{cases}\)
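In other words, the restricted MLE is just the sample mean clipped to the interval \([\theta_o, \theta_1]\). A minimal sketch, assuming NumPy and a hypothetical function name `restricted_mle` (neither appears in the original problem):

```python
import numpy as np

def restricted_mle(x, theta_0, theta_1):
    """Restricted MLE of theta for N(theta, 1) data with theta_0 <= theta <= theta_1."""
    if theta_0 >= theta_1:
        raise ValueError("theta_0 must be strictly less than theta_1")
    # Clip the sample mean to the allowed interval.
    return float(np.clip(np.mean(x), theta_0, theta_1))

print(restricted_mle([-2.0, -1.0, 0.0], 0.0, 1.0))  # sample mean -1.0, below the interval
print(restricted_mle([0.2, 0.4, 0.6], 0.0, 1.0))    # sample mean 0.4, inside the interval
print(restricted_mle([2.0, 3.0, 4.0], 0.0, 1.0))    # sample mean 3.0, above the interval
```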

Now we check that \(\hat{\theta_{RML}}\) is a better estimator than \(\bar{X}\) in terms of Mean Squared Error (MSE).

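Let \(f_{\bar{X}}\) denote the density of \(\bar{X} \sim N(\theta, \frac{1}{n})\). Now, \(MSE_{\theta}(\bar{X})=E_{\theta}(\bar{X}-\theta)^2=\int_{-\infty}^{\infty} (x-\theta)^2f_{\bar{X}}(x)\,dx\)

\(=\int_{-\infty}^{\theta_o} (x-\theta)^2f_{\bar{X}}(x)\,dx+\int_{\theta_o}^{\theta_1} (x-\theta)^2f_{\bar{X}}(x)\,dx+\int_{\theta_1}^{\infty} (x-\theta)^2f_{\bar{X}}(x)\,dx\).

\(\ge \int_{-\infty}^{\theta_o} (\theta_o-\theta)^2f_{\bar{X}}(x)\,dx+\int_{\theta_o}^{\theta_1} (x-\theta)^2f_{\bar{X}}(x)\,dx+\int_{\theta_1}^{\infty} (\theta_1-\theta)^2f_{\bar{X}}(x)\,dx\)

\(=E_{\theta}(\hat{\theta_{RML}}-\theta)^2=MSE_{\theta}(\hat{\theta_{RML}})\).

The inequality holds because \(\theta_o \le \theta \le \theta_1\): for \(x < \theta_o\) we have \(\theta - x > \theta - \theta_o \ge 0\), so \((x-\theta)^2 > (\theta_o-\theta)^2\), and similarly \((x-\theta)^2 > (\theta_1-\theta)^2\) for \(x > \theta_1\). Since the normal density is positive everywhere, the inequality is in fact strict.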

Hence proved !!
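A quick Monte Carlo check can make the comparison concrete. This is only a simulation sketch under assumed values (\(\theta_o = 0\), \(\theta_1 = 1\), \(n = 10\), and a hypothetical helper `simulate_mse`), not a replacement for the proof above.

```python
import numpy as np

def simulate_mse(theta, theta_0, theta_1, n=10, reps=100_000, seed=0):
    """Estimate the MSE of the sample mean and of the restricted MLE by simulation."""
    rng = np.random.default_rng(seed)
    # One sample mean of n i.i.d. N(theta, 1) draws per replication.
    xbar = rng.normal(loc=theta, scale=1.0, size=(reps, n)).mean(axis=1)
    # Restricted MLE: the sample mean clipped to [theta_0, theta_1].
    rml = np.clip(xbar, theta_0, theta_1)
    mse_mean = float(np.mean((xbar - theta) ** 2))
    mse_rml = float(np.mean((rml - theta) ** 2))
    return mse_mean, mse_rml

# True theta at the left end, in the middle, and at the right end of the interval.
for theta in (0.0, 0.5, 1.0):
    mse_mean, mse_rml = simulate_mse(theta, 0.0, 1.0)
    print(f"theta={theta}: MSE(sample mean)={mse_mean:.4f}, MSE(restricted MLE)={mse_rml:.4f}")
```

The simulated MSE of \(\bar{X}\) should be close to \(\frac{1}{n} = 0.1\), while the restricted estimator should come out smaller for every \(\theta\) in the interval, with the largest gain near the endpoints.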

Now, can you find an unbiased estimator for \(\theta^2\)? Okay, now it's quite easy, right? But is the estimator you are thinking of the best unbiased estimator? Calculate its variance and check whether the variance attains the Cramér-Rao Lower Bound.

Give it a try !! You may need the help of Stein's Identity.

In 2025, 8 students from Cheenta Academy cracked the prestigious Regional Math Olympiad. In this post, we will share some of their success stories and learning strategies. The Regional Mathematics Olympiad (RMO) and the Indian National Mathematics Olympiad (INMO) are two most important mathematics contests in India.These two contests are for the students who are […]

Cheenta Academy proudly celebrates the success of 27 current and former students who qualified for the Indian Olympiad Qualifier in Mathematics (IOQM) 2025, advancing to the next stage — RMO. This accomplishment highlights their perseverance and Cheenta’s ongoing mission to nurture mathematical excellence and research-oriented learning.

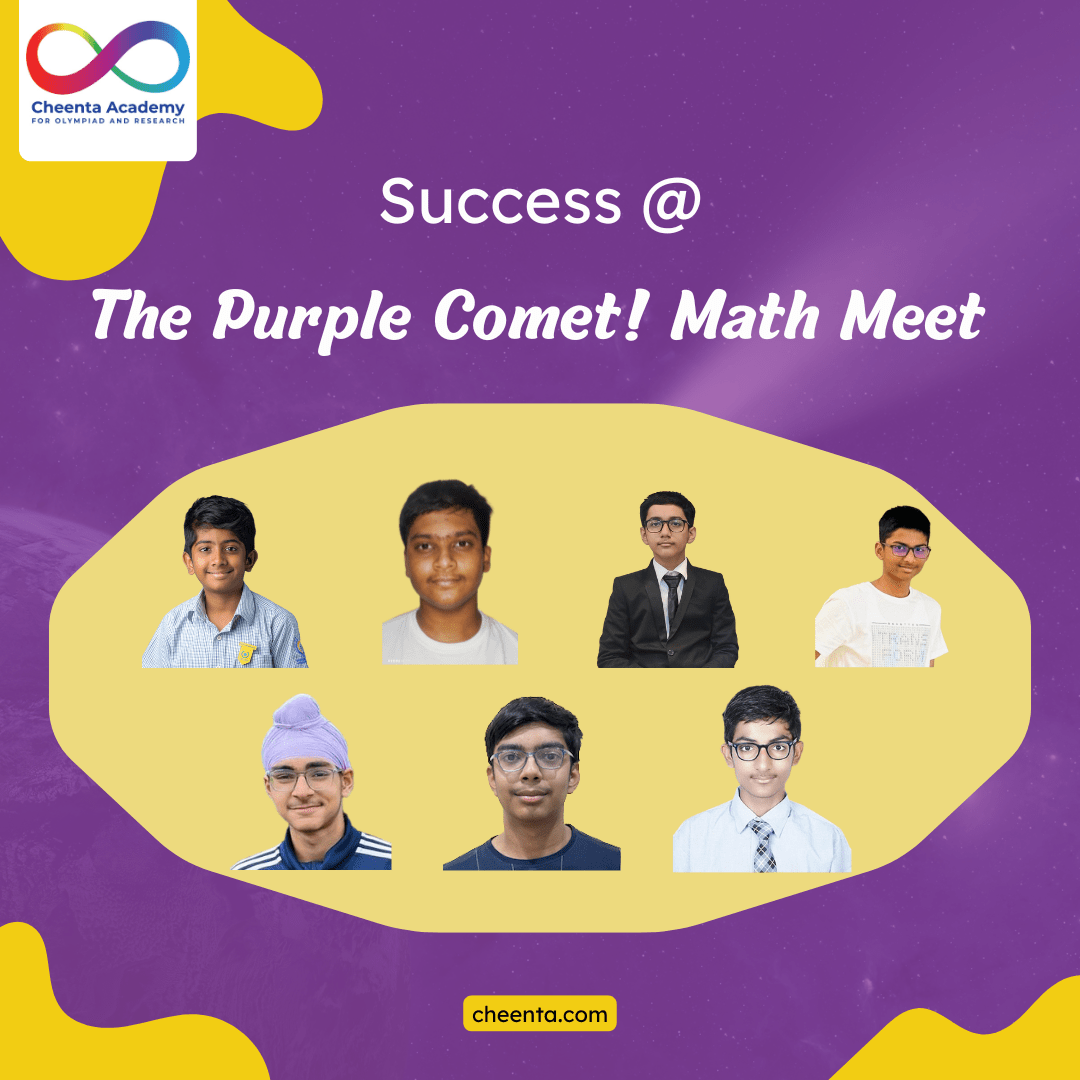

Cheenta students shine at the Purple Comet Math Meet 2025 organized by Titu Andreescu and Jonathan Kanewith top national and global ranks.

Celebrate the success of Cheenta students in the Stanford Math Tournament. The Unified Vectors team achieved Top 20 in the Team Round.